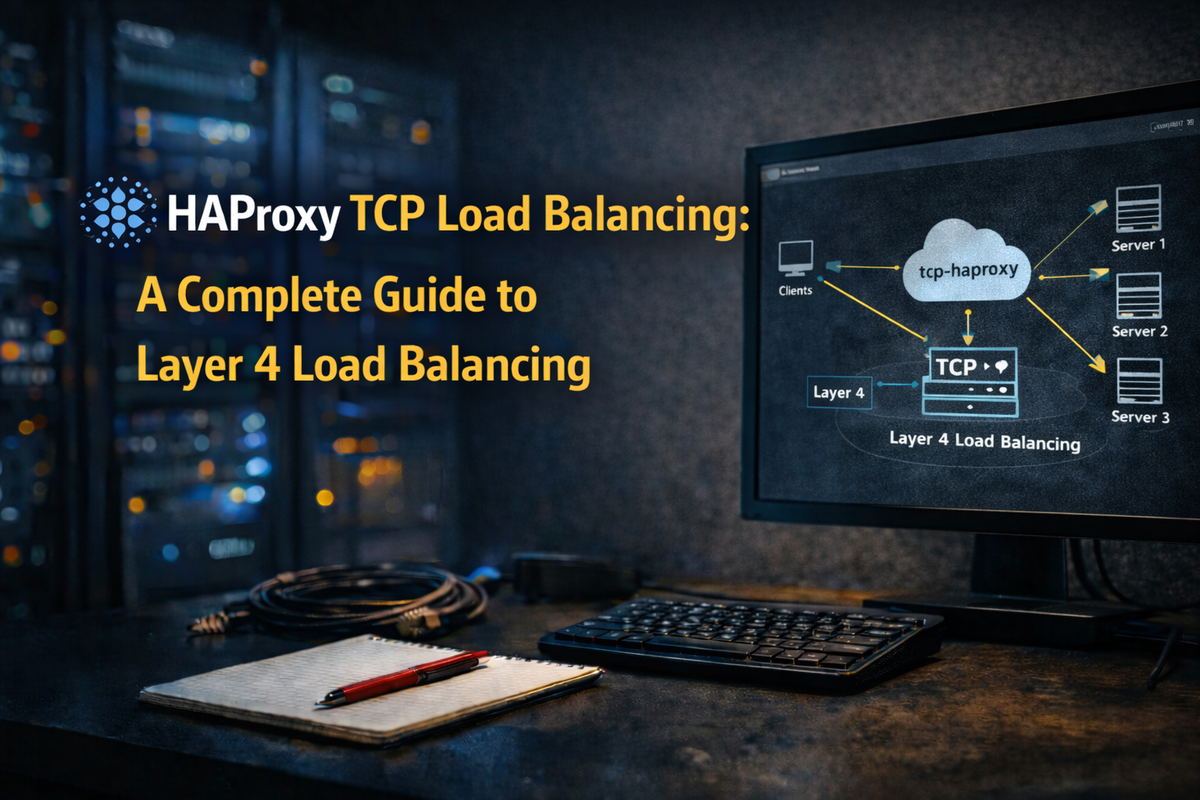

HAProxy TCP Load Balancing: A Complete Guide to Layer 4 Load Balancing

HAProxy is one of the most powerful and reliable load balancers available. While it's often associated with HTTP load balancing, HAProxy excels at Layer 4 (TCP) load balancing for any TCP-based protocol. This guide covers installation, balancing algorithms, health checks, connection management, high availability patterns, TLS passthrough, client IP preservation, logging, and monitoring.

Why TCP Load Balancing?

Layer 4 load balancing operates at the transport layer, making routing decisions based on IP addresses and ports without inspecting the actual content. This approach offers several advantages:

- Protocol agnostic: Works with any TCP-based protocol (databases, message queues, custom protocols)

- Lower latency: No content inspection means faster routing decisions

- TLS passthrough: End-to-end encryption without termination at the load balancer

- Simplicity: Fewer moving parts compared to Layer 7 configuration

Installation

On Debian/Ubuntu:

apt update

apt install haproxy

On RHEL/CentOS:

yum install haproxy

# Or for newer versions from the official repository

yum install https://repo.haproxy.org/rpm/haproxy-2.8-rhel-8.rpm

yum install haproxy28z

Basic TCP Load Balancer Configuration

Let's start with a simple TCP load balancer for a database cluster:

# /etc/haproxy/haproxy.cfg

global

log /dev/log local0

log /dev/log local1 notice

chroot /var/lib/haproxy

stats socket /run/haproxy/admin.sock mode 660 level admin

stats timeout 30s

user haproxy

group haproxy

daemon

# Performance tuning

maxconn 50000

tune.ssl.default-dh-param 2048

defaults

log global

mode tcp

option tcplog

option dontlognull

timeout connect 10s

timeout client 30s

timeout server 30s

retries 3

frontend mysql_frontend

bind *:3306

default_backend mysql_servers

backend mysql_servers

balance roundrobin

option mysql-check user haproxy

server mysql1 192.168.1.10:3306 check

server mysql2 192.168.1.11:3306 check

server mysql3 192.168.1.12:3306 check

Load Balancing Algorithms

HAProxy supports multiple load balancing algorithms for TCP mode:

Round Robin

Distributes new connections across the servers in turn, in proportion to each server's weight:

backend servers

balance roundrobin

server s1 192.168.1.10:5432 check weight 1

server s2 192.168.1.11:5432 check weight 2 # Gets 2x traffic

server s3 192.168.1.12:5432 check weight 1
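To make the effect of the weights concrete, the sketch below works out the share of new connections each server should expect under roundrobin. `weight_shares` is a hypothetical helper written for this illustration, not part of HAProxy.

```shell
# Hypothetical helper: print each server's expected share of new
# connections (integer percent) from name=weight pairs.
weight_shares() {
    total=0
    for pair in "$@"; do
        w=${pair#*=}                 # text after the "=" is the weight
        total=$(( total + w ))
    done
    for pair in "$@"; do
        w=${pair#*=}
        echo "${pair%%=*} $(( w * 100 / total ))%"
    done
}

weight_shares s1=1 s2=2 s3=1
```

For the weights 1, 2, and 1 used above, this prints 25%, 50%, and 25%.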

Least Connections

Sends traffic to the server with fewest active connections:

backend servers

balance leastconn

server s1 192.168.1.10:5432 check

server s2 192.168.1.11:5432 check

server s3 192.168.1.12:5432 check

Source IP Hash

Hashes the client's source IP so that a given client keeps reaching the same server as long as the set of available servers does not change (session persistence):

backend servers

balance source

hash-type consistent

server s1 192.168.1.10:5432 check

server s2 192.168.1.11:5432 check

server s3 192.168.1.12:5432 check
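To see why hashing pins a client to a server, and why `hash-type consistent` matters, consider the simplest possible scheme: hash the source IP and take the result modulo the number of servers. The sketch below uses `cksum` (a CRC) purely for illustration. HAProxy uses its own hash functions, and `pick_server` is a made-up name.

```shell
# Illustration only: map a client IP to a server index with a plain
# hash-modulo scheme. HAProxy's real hash function differs.
pick_server() {  # usage: pick_server CLIENT_IP SERVER_COUNT
    crc=$(printf '%s' "$1" | cksum | cut -d ' ' -f 1)
    echo $(( crc % $2 ))
}

pick_server 203.0.113.7 3   # same IP and same count: always the same index
pick_server 203.0.113.7 2   # with one server fewer, the index may change
```

With a plain modulo, changing the server count remaps most clients. Consistent hashing limits the remapping to roughly the clients that belonged to the server that was added or removed.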

First Available

Uses the first server with available connection slots:

backend servers

balance first

server s1 192.168.1.10:5432 check maxconn 100

server s2 192.168.1.11:5432 check maxconn 100

Health Checks

Proper health checking is crucial for high availability.

Basic TCP Check

backend servers

option tcp-check

server s1 192.168.1.10:5432 check

Protocol-Specific Health Checks

MySQL/MariaDB

backend mysql_servers

option mysql-check user haproxy

server mysql1 192.168.1.10:3306 check

Create the HAProxy user in MySQL:

CREATE USER 'haproxy'@'%';

FLUSH PRIVILEGES;

PostgreSQL

backend postgres_servers

option pgsql-check user haproxy

server pg1 192.168.1.10:5432 check

Redis

backend redis_servers

option tcp-check

tcp-check connect

tcp-check send PING\r\n

tcp-check expect string +PONG

server redis1 192.168.1.10:6379 check

Custom TCP Check

backend custom_servers

option tcp-check

tcp-check connect port 8080

tcp-check send "GET /health HTTP/1.1\r\nHost: localhost\r\n\r\n"

tcp-check expect string "HTTP/1.1 200" # matches the status line (expect status is http-check only)

server app1 192.168.1.10:8080 check

Health Check Parameters

backend servers

default-server inter 3s fall 3 rise 2

server s1 192.168.1.10:5432 check

server s2 192.168.1.11:5432 check inter 5s fall 2 rise 3

- inter: Interval between checks (default: 2s)

- fall: Number of failed checks before marking server down

- rise: Number of successful checks before marking server up
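These parameters determine roughly how quickly HAProxy reacts to a failure. As a back-of-the-envelope estimate that ignores how long each individual check takes, a server is marked down after about inter × fall seconds and brought back after about inter × rise seconds. The helper name `check_delay` is invented for this sketch.

```shell
# Rough estimate, in seconds, of how long a state change takes,
# ignoring the time each individual check needs to fail or succeed.
check_delay() {  # usage: check_delay INTER_SECONDS COUNT
    echo $(( $1 * $2 ))
}

# Values from the default-server line: inter 3s fall 3 rise 2
echo "down after ~$(check_delay 3 3)s, up after ~$(check_delay 3 2)s"
```

Shorter intervals detect failures sooner but put more check traffic on the backends.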

Connection Management

Maximum Connections

global

maxconn 50000

frontend db_frontend

bind *:5432

maxconn 10000

default_backend db_servers

backend db_servers

server db1 192.168.1.10:5432 check maxconn 500

server db2 192.168.1.11:5432 check maxconn 500

Connection Queuing

backend servers

fullconn 1000

server s1 192.168.1.10:5432 check maxconn 100 maxqueue 50

Connection Timeouts

defaults

timeout connect 5s # Time to establish connection to server

timeout client 60s # Inactivity timeout on client side

timeout server 60s # Inactivity timeout on server side

timeout tunnel 1h # Timeout for tunneled connections (WebSocket, etc.)

timeout client-fin 30s # Timeout for client FIN

timeout server-fin 30s # Timeout for server FIN

For long-lived database connections:

defaults

timeout client 3600s

timeout server 3600s

High Availability Patterns

Active-Passive with Backup Servers

backend db_servers

balance roundrobin

server primary1 192.168.1.10:5432 check

server primary2 192.168.1.11:5432 check

server backup1 192.168.1.20:5432 check backup

server backup2 192.168.1.21:5432 check backup

Read/Write Splitting

For database clusters with a primary and replicas:

frontend mysql_frontend

bind *:3306

bind *:3307

# Port 3306 -> Write (Primary)

# Port 3307 -> Read (Replicas)

use_backend mysql_write if { dst_port 3306 }

use_backend mysql_read if { dst_port 3307 }

backend mysql_write

option mysql-check user haproxy

server primary 192.168.1.10:3306 check

backend mysql_read

balance leastconn

option mysql-check user haproxy

server replica1 192.168.1.11:3306 check

server replica2 192.168.1.12:3306 check

server primary 192.168.1.10:3306 check backup # Fallback to primary

Graceful Server Maintenance

backend servers

server s1 192.168.1.10:5432 check

server s2 192.168.1.11:5432 check weight 0 # Drained: receives no new load-balanced connections

Use the stats socket to manage servers dynamically:

# Disable a server (existing connections preserved)

echo "disable server servers/s1" | socat stdio /run/haproxy/admin.sock

# Set server to drain mode

echo "set server servers/s1 state drain" | socat stdio /run/haproxy/admin.sock

# Re-enable a server

echo "enable server servers/s1" | socat stdio /run/haproxy/admin.sock

# Check server status

echo "show servers state" | socat stdio /run/haproxy/admin.sock
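When these commands are run often, a small wrapper keeps them consistent. This is a sketch, assuming `socat` is installed and the admin socket lives at the path used in the global section. The function names `hap_cmd`, `hap_drain`, and `hap_ready` are invented for this example.

```shell
# Path of the admin socket; override by exporting HAPROXY_SOCK.
HAPROXY_SOCK="${HAPROXY_SOCK:-/run/haproxy/admin.sock}"

# Send one runtime command to the admin socket and print the reply.
hap_cmd() {
    echo "$1" | socat stdio "$HAPROXY_SOCK"
}

# Stop sending new connections to a server; existing ones continue.
hap_drain() {  # usage: hap_drain BACKEND SERVER
    hap_cmd "set server $1/$2 state drain"
}

# Put a drained server back into rotation.
hap_ready() {  # usage: hap_ready BACKEND SERVER
    hap_cmd "set server $1/$2 state ready"
}
```

For example, run `hap_drain servers s1` before maintenance and `hap_ready servers s1` afterwards.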

TLS Passthrough

For end-to-end encryption without terminating TLS at the load balancer:

frontend tls_frontend

bind *:443

mode tcp

option tcplog

default_backend tls_servers

backend tls_servers

mode tcp

balance roundrobin

server s1 192.168.1.10:443 check

server s2 192.168.1.11:443 check

SNI-Based Routing

Route to different backends based on the SNI (Server Name Indication) value in the TLS ClientHello, without decrypting the traffic:

frontend tls_frontend

bind *:443

mode tcp

tcp-request inspect-delay 5s

tcp-request content accept if { req_ssl_hello_type 1 }

use_backend app1_servers if { req_ssl_sni -i app1.example.com }

use_backend app2_servers if { req_ssl_sni -i app2.example.com }

default_backend default_servers

backend app1_servers

mode tcp

server app1 192.168.1.10:443 check

backend app2_servers

mode tcp

server app2 192.168.1.20:443 check

Client IP Preservation

PROXY Protocol

The PROXY protocol passes client information through TCP load balancers:

frontend tcp_frontend

bind *:5432

default_backend tcp_servers

backend tcp_servers

server s1 192.168.1.10:5432 check send-proxy-v2

The backend server must support PROXY protocol. For HAProxy as the backend:

frontend backend_frontend

bind *:5432 accept-proxy

# ...
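`send-proxy-v2` uses a binary header, which is hard to read on the wire. The older text format (version 1, enabled with `send-proxy` instead) carries the same kind of information and is easier to inspect: a single line sent before any application data. The sketch below builds such a line. The addresses and the helper name `proxy_v1_header` are placeholders chosen for illustration.

```shell
# Build a PROXY protocol version 1 header line for an IPv4 connection.
proxy_v1_header() {  # usage: proxy_v1_header SRC_IP DST_IP SRC_PORT DST_PORT
    printf 'PROXY TCP4 %s %s %s %s\r\n' "$1" "$2" "$3" "$4"
}

# od -c makes the terminating \r\n visible
proxy_v1_header 203.0.113.7 192.168.1.10 51234 5432 | od -c
```

A backend that does not expect this header will treat it as application data and most likely reject the connection, which is why both sides must agree on it.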

Logging

TCP-Specific Logging

global

log 127.0.0.1:514 local0 info

defaults

mode tcp

option tcplog

log global

# Custom TCP log format

frontend tcp_frontend

bind *:5432

log-format "%ci:%cp [%t] %ft %b/%s %Tw/%Tc/%Tt %B %ts %ac/%fc/%bc/%sc/%rc %sq/%bq"

Log format variables for TCP:

- %ci: Client IP

- %cp: Client port

- %ft: Frontend name

- %b: Backend name

- %s: Server name

- %Tw: Time waiting in queue (ms)

- %Tc: Time to connect to server (ms)

- %Tt: Total time (ms)

- %B: Bytes read from server

- %ts: Termination state

Configure rsyslog:

# /etc/rsyslog.d/haproxy.conf

$ModLoad imudp

$UDPServerRun 514

local0.* /var/log/haproxy.log
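Once log lines land in /var/log/haproxy.log, the custom format can be picked apart with standard tools. The sketch below lists sessions whose total time (%Tt) exceeds a threshold. It assumes the custom log-format shown earlier and a syslog prefix ending in `haproxy[PID]: `. Both that prefix shape and the function name `slow_sessions` are assumptions to adjust for your setup.

```shell
# Print client, backend/server and total time for sessions slower
# than THRESHOLD_MS. Reads log lines on standard input.
slow_sessions() {  # usage: slow_sessions THRESHOLD_MS < /var/log/haproxy.log
    awk -v limit="$1" '{
        sub(/^.*haproxy\[[0-9]+\]: /, "")   # drop the assumed syslog prefix
        split($5, t, "/")                    # t[1]=Tw, t[2]=Tc, t[3]=Tt
        if (t[3] + 0 > limit + 0) {
            print $1, $4, t[3] "ms"
        }
    }'
}
```

For example, `slow_sessions 60000 < /var/log/haproxy.log` would list sessions that lasted longer than a minute.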

Statistics and Monitoring

Stats Page

frontend stats

bind *:8404

mode http

stats enable

stats uri /stats

stats refresh 10s

stats admin if LOCALHOST

Prometheus Metrics

frontend stats

bind *:8404

mode http

http-request use-service prometheus-exporter if { path /metrics }

Complete Production Example

Here's a complete configuration for load balancing a PostgreSQL cluster:

# /etc/haproxy/haproxy.cfg

global

log /dev/log local0

log /dev/log local1 notice

chroot /var/lib/haproxy

stats socket /run/haproxy/admin.sock mode 660 level admin expose-fd listeners

stats timeout 30s

user haproxy

group haproxy

daemon

maxconn 100000

nbthread 4

cpu-map auto:1/1-4 0-3

# Security

ssl-default-bind-ciphers ECDHE-ECDSA-AES128-GCM-SHA256:ECDHE-RSA-AES128-GCM-SHA256

ssl-default-bind-options ssl-min-ver TLSv1.2 no-tls-tickets

defaults

log global

mode tcp

option tcplog

option dontlognull

option log-health-checks

timeout connect 5s

timeout client 3600s

timeout server 3600s

timeout check 3s

retries 3

option redispatch

default-server inter 3s fall 3 rise 2

# Stats frontend

listen stats

bind *:8404

mode http

stats enable

stats uri /stats

stats refresh 10s

stats admin if LOCALHOST

http-request use-service prometheus-exporter if { path /metrics }

# PostgreSQL Primary (Read/Write)

frontend pg_write

bind *:5432

default_backend pg_primary

# ACL for connection limiting

acl too_many_conns fe_conn gt 5000

tcp-request connection reject if too_many_conns

backend pg_primary

option pgsql-check user haproxy

server pg-primary 192.168.1.10:5432 check

server pg-standby1 192.168.1.11:5432 check backup

# PostgreSQL Replicas (Read-Only)

frontend pg_read

bind *:5433

default_backend pg_replicas

backend pg_replicas

balance leastconn

option pgsql-check user haproxy

server pg-standby1 192.168.1.11:5432 check

server pg-standby2 192.168.1.12:5432 check

server pg-primary 192.168.1.10:5432 check backup # Fallback

# Redis primary/replica (only the current master passes the check)

frontend redis

bind *:6379

default_backend redis_servers

backend redis_servers

balance first

option tcp-check

tcp-check connect

tcp-check send "PING\r\n"

tcp-check expect string +PONG

tcp-check send "INFO replication\r\n"

tcp-check expect string role:master

tcp-check send "QUIT\r\n"

tcp-check expect string +OK

server redis1 192.168.1.20:6379 check

server redis2 192.168.1.21:6379 check

server redis3 192.168.1.22:6379 check

Validation and Testing

Configuration Validation

haproxy -c -f /etc/haproxy/haproxy.cfg
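A common pattern is to run this check before every reload, so that a broken file never reaches the running process. Here is a minimal sketch, assuming HAProxy runs under systemd with a unit named `haproxy`, as is typical for distribution packages. `safe_reload` is a hypothetical helper name.

```shell
# Validate the configuration and reload only if the check passes.
safe_reload() {  # usage: safe_reload [CONFIG_PATH]
    cfg="${1:-/etc/haproxy/haproxy.cfg}"
    if haproxy -c -f "$cfg"; then
        systemctl reload haproxy
    else
        echo "config check failed; not reloading" >&2
        return 1
    fi
}
```

Calling `safe_reload` with no argument checks the default path used throughout this guide.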

Load Testing

# Open 100 concurrent TCP connections to check that the frontend accepts them

for i in {1..100}; do

nc -z -w1 haproxy.example.com 5432 &

done

wait

# PostgreSQL specific

pgbench -h haproxy.example.com -p 5432 -c 50 -j 4 -T 60 mydb

Troubleshooting

Common Issues

- Connection timeouts: Increase timeout server and timeout client

- Server marked DOWN: Check health check configuration and network connectivity

- Connection refused: Verify backend servers are listening and firewall rules

- Slow connections: Check balance algorithm and server capacity

Debug Mode

# Run HAProxy in debug mode

haproxy -d -f /etc/haproxy/haproxy.cfg

# Check runtime status

echo "show info" | socat stdio /run/haproxy/admin.sock

echo "show stat" | socat stdio /run/haproxy/admin.sock

echo "show errors" | socat stdio /run/haproxy/admin.sock

Next Steps

This post covered the fundamentals of TCP load balancing with HAProxy. In the next post, we'll explore DNS load balancing with HAProxy, which allows you to load balance DNS queries across multiple resolvers.

Summary

HAProxy's TCP mode provides a robust foundation for load balancing any TCP-based service. Key takeaways:

- Use mode tcp for Layer 4 load balancing

- Choose the right balancing algorithm for your use case

- Implement proper health checks for each protocol

- Configure appropriate timeouts for your workload

- Use the stats socket for runtime management

- Enable logging for troubleshooting and monitoring

TCP load balancing is the building block for highly available infrastructure, whether you're running databases, message queues, or custom services.